This is a very short post that will be very useful to help you quickly set up your COVID-19 datasets. I’m sharing code at the end of this post that scrapes through all CSV datasets made available by COVID19-India API.

Copy paste this standalone script into your R environment and get going!

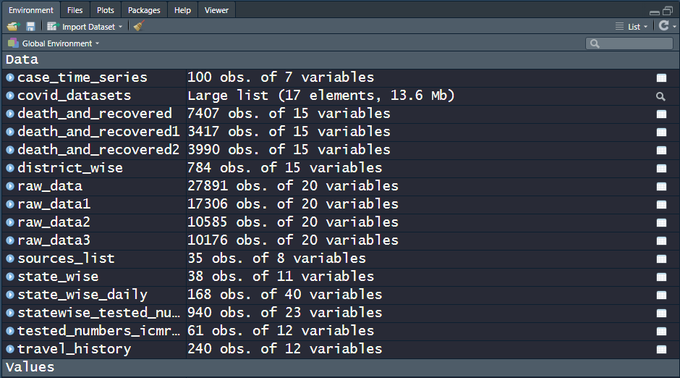

There are 15+ CSV files on the India COVID-19 API website. raw_data3 is actually a live dataset and more can be expected in the days to come, which is why a script that automates the data sourcing comes in handy. Snapshot of the file names and the data dimensions as of today, 100 days since the first case was recorded in the state of Kerala —

My own analysis of the data and predictions are work-in-progress, going into a Github repo. Execute the code below and get started analyzing the data and fighting COVID-19!

| rm(list = ls()) | |

| # Load relevant libraries ----------------------------------------------------- | |

| library(stringr) | |

| library(data.table) | |

| # ============================================================================= | |

| # COVID 19-India API: A volunteer-driven, crowdsourced database | |

| # for COVID-19 stats & patient tracing in India | |

| # ============================================================================= | |

| url <- "https://api.covid19india.org/csv/" | |

| # List out all CSV files to source -------------------------------------------- | |

| html <- paste(readLines(url), collapse="\n") | |

| pattern <- "https://api.covid19india.org/csv/latest/.*csv" | |

| matched <- unlist(str_match_all(string = html, pattern = pattern)) | |

| # Downloading the Data -------------------------------------------------------- | |

| covid_datasets <- lapply(as.list(matched), fread) | |

| # Naming the data objects appropriately --------------------------------------- | |

| exclude_chars <- "https://api.covid19india.org/csv/latest/" | |

| dataset_names <- substr(x = matched, | |

| start = 1 + nchar(exclude_chars), | |

| stop = nchar(matched)- nchar(".csv")) | |

| # assigning variable names | |

| for(i in seq_along(dataset_names)){ | |

| assign(dataset_names[i], covid_datasets[[i]]) | |

| } | |